Integrate AI into my product

Transform your product with AI.

We help you transform your product by integrating AI: audit, advisory, software development and maintenance.

Our approach

Audit

Together, we identify your business goals and constraints: audience, problem to solve, market, functional needs. We then write a specification that aligns our visions.

Advisory

We research the state of the art of existing technologies (LLMs, NLP, OCR…) in depth to propose the solutions best suited to your situation.

Development

We build the AI integration into your product in close collaboration with your technical teams.

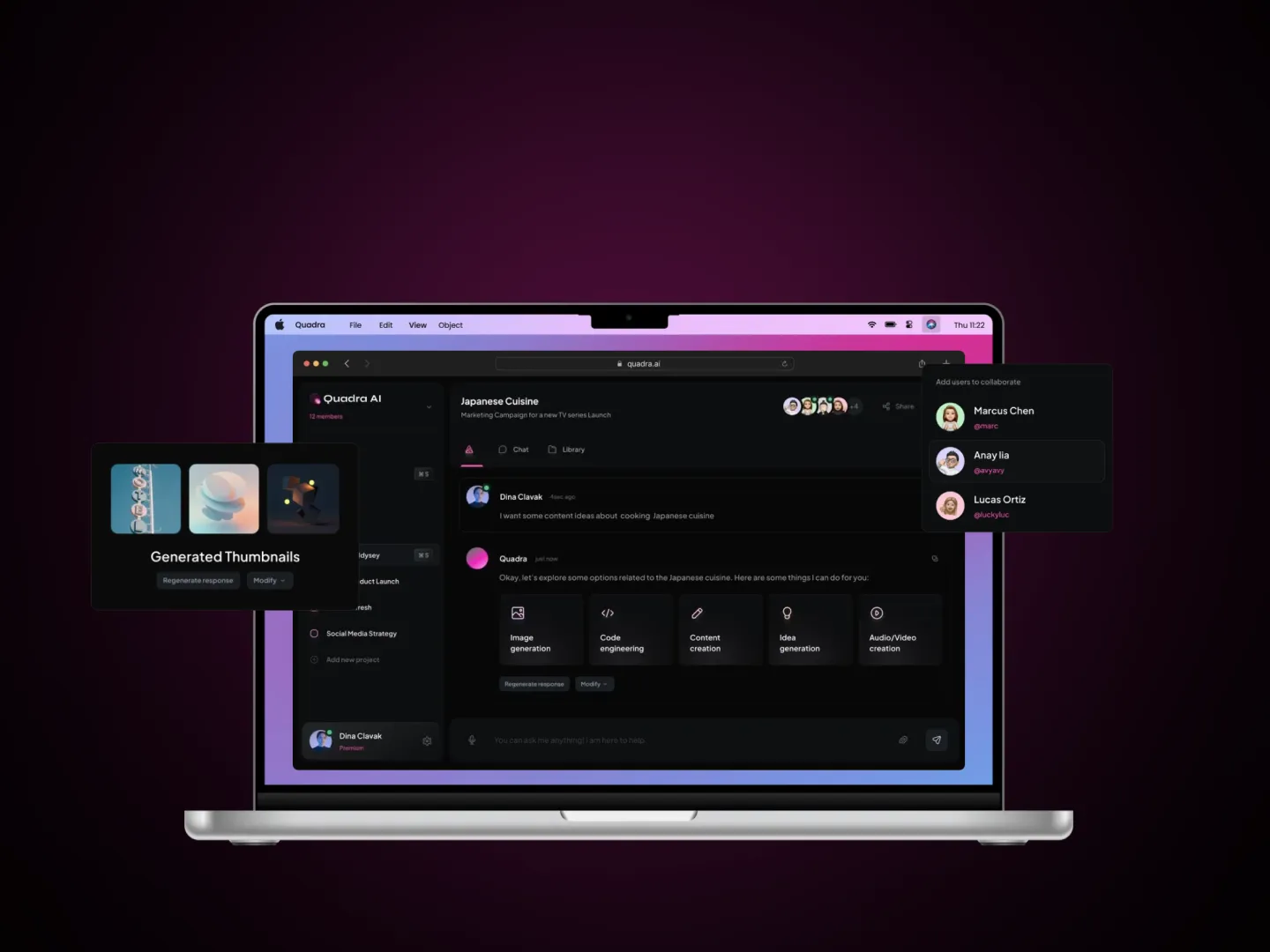

Our client successes

We build our own products. That's why we know how to build yours.

You have a product that works, users, data. The question isn't 'how do we put AI everywhere' — it's 'where does an AI building block actually create value, and what does it cost to run'. We work on existing SaaS or back-office products to add exactly what's useful: semantic search, automated classification, summary generation, smart OCR. No rewrite. We graft, measure, adjust.

Typical use cases

Semantic search

Replace a SQL or Elasticsearch keyword search with multilingual semantic search and ranking tuned to your domain.
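To make "semantic search" concrete, here is a minimal sketch of the ranking step: documents and the query are represented as embedding vectors, and results are ordered by cosine similarity. The vectors below are made-up 3-dimensional toys standing in for real embeddings, and `rank_documents` is a hypothetical helper; in production the vectors would come from an embedding model and the ranking would usually run inside pgvector or a vector database rather than in Python.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two vectors of equal length (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank_documents(
    query_vec: Sequence[float],
    doc_vecs: Dict[str, Sequence[float]],
    top_k: int = 3,
) -> List[Tuple[str, float]]:
    """Return the top_k document ids, sorted by decreasing similarity to the query."""
    scored = [(doc_id, cosine_similarity(query_vec, vec)) for doc_id, vec in doc_vecs.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:top_k]


# Toy vectors (hypothetical): real embeddings have hundreds or thousands of dimensions
docs = {
    "invoice-faq": [0.9, 0.1, 0.0],
    "shipping-policy": [0.1, 0.8, 0.2],
    "refund-guide": [0.7, 0.3, 0.1],
}
query = [0.8, 0.2, 0.0]
print([doc_id for doc_id, _ in rank_documents(query, docs, top_k=2)])  # ['invoice-faq', 'refund-guide']
```

Domain tuning would typically sit on top of this score, for example by boosting certain document types or re-ranking the top results.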

Automated classification

Support ticket tagging, e-commerce product categorisation, anomaly detection in form entries — cuts manual work by 60-90%.
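A common way to make LLM classification safe to automate is to constrain the answer to a fixed taxonomy and route anything unexpected to a human. The sketch below assumes a hypothetical ticket taxonomy (`ALLOWED_LABELS`) and a generic `call_llm` function that sends a prompt and returns text; `fake_llm` is a stub, not a real client.

```python
from typing import Callable

ALLOWED_LABELS = {"billing", "bug", "feature_request", "account"}  # hypothetical taxonomy
FALLBACK_LABEL = "needs_human_review"


def build_prompt(ticket_text: str) -> str:
    """Ask for exactly one label from the taxonomy, and nothing else."""
    labels = ", ".join(sorted(ALLOWED_LABELS))
    return (
        f"Classify the support ticket into exactly one of: {labels}.\n"
        f"Answer with the label only.\n\nTicket:\n{ticket_text}"
    )


def classify_ticket(ticket_text: str, call_llm: Callable[[str], str]) -> str:
    """Accept the model's label only if it belongs to the taxonomy."""
    raw = call_llm(build_prompt(ticket_text))
    label = raw.strip().lower()
    # Anything outside the allowed set goes to a human instead of being trusted blindly
    return label if label in ALLOWED_LABELS else FALLBACK_LABEL


# Stub standing in for a real LLM call (hypothetical)
def fake_llm(prompt: str) -> str:
    return " Billing\n" if "invoice" in prompt else "something else"


print(classify_ticket("I was charged twice on my invoice", fake_llm))  # billing
print(classify_ticket("The app is slow", fake_llm))  # needs_human_review
```

The share of tickets that end up in `needs_human_review` is also a simple quality metric to track during the pilot.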

Assisted generation

Pre-filling fields (description, email, summary) with human validation — accelerates internal workflows without changing the UI.
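"Human validation" can be enforced in the data model itself: the AI output is stored as a draft and only written to the record once someone accepts or edits it. A minimal sketch, where `FieldSuggestion` and `apply_to_record` are hypothetical names and the record is a plain dict standing in for your database row:

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class FieldSuggestion:
    """An AI-generated draft for one form field; never saved until a human validates it."""
    field: str
    draft: str
    final: Optional[str] = None
    status: str = "pending"  # pending -> accepted / edited / rejected

    def accept(self) -> None:
        self.final, self.status = self.draft, "accepted"

    def edit(self, text: str) -> None:
        self.final, self.status = text, "edited"

    def reject(self) -> None:
        self.final, self.status = None, "rejected"


def apply_to_record(record: dict, suggestion: FieldSuggestion) -> dict:
    """Return a copy of the record with the field set, but only after human validation."""
    if suggestion.status in ("accepted", "edited"):
        return {**record, suggestion.field: suggestion.final}
    return record


suggestion = FieldSuggestion("summary", "Customer asks for a refund on order 42.")
record = apply_to_record({"id": 1}, suggestion)  # still pending: record unchanged
suggestion.edit("Refund request for order 42.")
record = apply_to_record(record, suggestion)
print(record)  # {'id': 1, 'summary': 'Refund request for order 42.'}
```

Keeping the status per suggestion also lets you measure how often drafts are accepted as-is versus edited, which is a direct signal of the building block's usefulness.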

Our method in brief

Three steps:
(1) Audit: we read the code, observe the workflows where people perform repetitive tasks or where the UX gets stuck, and quantify the potential impact.
(2) Pilot on one case: we integrate the building block into 1 use case in 3-4 weeks and measure the gain and the inference cost in production.
(3) Rollout: observability, quality monitoring, fallback on LLM errors, and deployment to the remaining cases.
Each step stands on its own: you can stop us after any one of them.

Stack & technologies

Preferred APIs: Claude Sonnet 4 (best quality/price ratio for integrations), GPT-4o-mini, Mistral Small, Gemini Flash. Embeddings: OpenAI text-embedding-3, Voyage AI, Mistral Embed. Vector search: pgvector if Postgres is already in place, otherwise Qdrant or Weaviate. Typical stack: Python (FastAPI) or Node.js, integrated via webhooks or APIs. We avoid introducing new infrastructure if yours is sufficient.

// CS Consulting and several SaaS products run in production with AI building blocks integrated without a rewrite (2024-2025)

Frequently asked questions

Do you touch our existing stack or add a service alongside?

By default we add a microservice or a dedicated route to isolate cost and observability. If complexity doesn't justify it, we integrate directly into your back-end. The choice depends on your architecture and the maintenance effort.
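Isolating the AI building block makes it easy to measure it on its own. As an illustration, a small decorator can record call counts, errors and latency per AI feature; `track_ai_feature`, `METRICS` and `classify` are hypothetical names, and the in-memory dict stands in for whatever monitoring system you already use. Token usage (and therefore cost) could be attributed to each feature the same way, from the usage data your provider returns.

```python
import time
from collections import defaultdict
from functools import wraps
from typing import Dict

# In-memory metrics store (hypothetical); in production this would feed your monitoring stack
METRICS: Dict[str, Dict[str, float]] = defaultdict(
    lambda: {"calls": 0, "errors": 0, "total_latency_s": 0.0}
)


def track_ai_feature(feature: str):
    """Record calls, errors and cumulative latency for one AI feature."""
    def decorator(func):
        @wraps(func)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            METRICS[feature]["calls"] += 1
            try:
                return func(*args, **kwargs)
            except Exception:
                METRICS[feature]["errors"] += 1
                raise
            finally:
                METRICS[feature]["total_latency_s"] += time.perf_counter() - start
        return wrapper
    return decorator


@track_ai_feature("ticket_classification")
def classify(text: str) -> str:
    return "billing"  # stand-in for the real model call


classify("I was charged twice")
print(dict(METRICS["ticket_classification"]))
```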

What's the impact on infrastructure cost?

Inference cost depends on volume and model: a GPT-4o-mini classifier on 100k requests/month costs about €50. A Claude Sonnet agent on 10k long conversations, more like €800. We size this cost at scoping and optimise (caching, lighter model, batching) if needed.
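The sizing done at scoping boils down to simple arithmetic: requests per month times average tokens per request times the per-million-token price, for input and output separately. The sketch below also models caching as a share of requests that never reach the API. The token counts and prices in the example are illustrative placeholders, not current list prices for any model; plug in your own volumes and your provider's published pricing.

```python
def monthly_inference_cost(
    requests_per_month: int,
    input_tokens_per_request: int,
    output_tokens_per_request: int,
    input_price_per_million: float,
    output_price_per_million: float,
    cache_hit_rate: float = 0.0,
) -> float:
    """Estimate monthly spend from average token counts and per-million-token prices.

    Requests served from an application cache (cache_hit_rate) are assumed to cost nothing.
    """
    if not 0.0 <= cache_hit_rate <= 1.0:
        raise ValueError("cache_hit_rate must be between 0 and 1")
    billable_requests = requests_per_month * (1.0 - cache_hit_rate)
    input_cost = billable_requests * input_tokens_per_request * input_price_per_million / 1_000_000
    output_cost = billable_requests * output_tokens_per_request * output_price_per_million / 1_000_000
    return input_cost + output_cost


# Hypothetical classifier: 100k requests/month, 1,500 input and 20 output tokens each,
# at illustrative prices of 0.15 (input) and 0.60 (output) per million tokens
baseline = monthly_inference_cost(100_000, 1_500, 20, 0.15, 0.60)
with_cache = monthly_inference_cost(100_000, 1_500, 20, 0.15, 0.60, cache_hit_rate=0.5)
print(round(baseline, 2), round(with_cache, 2))  # 23.7 11.85
```

The same function makes the optimisation levers visible: a lighter model lowers the prices, a shorter prompt lowers the token counts, and caching lowers the billable requests.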

What if the LLM API goes down?

Fallbacks are mandatory in all our production integrations: retry, fallback to a secondary model (Mistral if Claude is down, for example), and an application-level fallback (the user sees the previous non-AI behaviour). No critical feature depends on a single provider.
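The three fallback levels described above can be sketched as a provider chain: retry each model with exponential backoff, move on to the next model, and finally fall back to the non-AI behaviour. `call_with_fallback`, `summarise` and the two model stubs are hypothetical; the broad `except Exception` is a simplification, and a real integration would catch the client library's specific timeout, rate-limit and server errors.

```python
import time
from typing import Callable, Sequence, Tuple

Provider = Tuple[str, Callable[[str], str]]  # (name, function sending a prompt to one model)


class AllProvidersFailed(Exception):
    """Raised when every provider in the chain has failed."""


def call_with_fallback(
    prompt: str, providers: Sequence[Provider], retries: int = 2, backoff_s: float = 0.5
) -> Tuple[str, str]:
    """Try each provider in order; retry each one with exponential backoff before moving on."""
    for name, call in providers:
        for attempt in range(retries + 1):
            try:
                return name, call(prompt)
            except Exception:  # simplification: catch the client's transient errors in practice
                if attempt < retries:
                    time.sleep(backoff_s * 2 ** attempt)
    raise AllProvidersFailed(f"{len(providers)} provider(s) failed")


def summarise(text: str, providers: Sequence[Provider], backoff_s: float = 0.5) -> str:
    """Application-level fallback: if every model fails, keep the previous non-AI behaviour."""
    try:
        _, summary = call_with_fallback(f"Summarise:\n{text}", providers, backoff_s=backoff_s)
        return summary
    except AllProvidersFailed:
        return text[:200]  # hypothetical non-AI behaviour: plain truncation


# Stubs standing in for real SDK calls (hypothetical)
def primary_model(prompt: str) -> str:
    raise TimeoutError("primary provider unavailable")


def secondary_model(prompt: str) -> str:
    return "Short summary from the secondary model."


chain = [("primary", primary_model), ("secondary", secondary_model)]
print(summarise("Long ticket text", chain, backoff_s=0.0))  # Short summary from the secondary model.
```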

How long to integrate a building block?

The pilot on one case takes 3-4 weeks (audit + dev + test). Rollout (observability, monitoring, multi-case deployment) adds 4-6 weeks depending on complexity.

Got a project?

Nothing beats a conversation to shape the right solution together.